RAG in 2025: From Static Retrieval to Intelligent Knowledge Systems

Retrieval-Augmented Generation (RAG) has rapidly become a core architecture for enterprise AI applications. In its early days, RAG was mainly used to connect large language models (LLMs) with external documents. However, as we move into 2025, RAG is no longer just about “retrieval + generation.”

It is evolving into a smarter, more adaptive knowledge system that can reason, update, and optimize itself over time.

This article explores how RAG is transforming in 2025 and what that means for enterprises building AI-driven products and internal systems.

1. The Limits of Traditional RAG

Early RAG implementations followed a relatively static workflow:

- Documents are indexed and stored in a vector database

- User queries are embedded and matched using similarity search

- Retrieved chunks are passed to an LLM for response generation
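
The three steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: `embed` is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database, and all function names and sample chunks are hypothetical.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def build_index(chunks: list[str]) -> list[tuple[str, Counter]]:
    # Step 1: index once. Nothing changes until this is re-run by hand.
    return [(chunk, embed(chunk)) for chunk in chunks]

def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    # Step 2: embed the query and rank chunks by similarity.
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

def build_prompt(query: str, context: list[str]) -> str:
    # Step 3: the retrieved chunks are handed to the LLM as context.
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {query}"

# Made-up sample chunks for illustration.
index = build_index([
    "The VPN client requires version 4.2 or later.",
    "Expense reports are due on the fifth business day.",
    "Reset the VPN password from the self-service portal.",
])
question = "how do I reset my VPN password"
top = retrieve(index, question, k=1)
prompt = build_prompt(question, top)
```

Note that the same fixed `k` and the same similarity function are applied to every query, which is the static behavior the limitations below refer to.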

While effective, this approach has several limitations:

- Retrieval quality depends heavily on chunking strategy and embeddings

- Context selection is often shallow: a fixed top-k similarity match that ignores document structure and metadata

- Knowledge updates require manual re-indexing

- The system lacks awareness of user intent or task complexity

As enterprise use cases grow more complex, these constraints become increasingly visible.

2. RAG Becomes Context-Aware

In 2025, RAG systems are shifting from static retrieval toward context-aware retrieval.

Modern RAG pipelines can now:

- Analyze user intent before retrieval

- Dynamically adjust retrieval strategies based on query type

- Combine semantic search with metadata, structure, and business rules

For example, a factual query, a troubleshooting request, and a strategic question should not be handled by the same retrieval logic. Smarter RAG systems recognize these differences and retrieve information accordingly.
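
One way to picture this is a small router that classifies the query and then picks a retrieval plan. The sketch below is an assumption-laden illustration: the keyword heuristic stands in for an LLM or trained intent classifier, and the plan fields, source names, and numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RetrievalPlan:
    sources: tuple[str, ...]  # which knowledge sources to query
    top_k: int                # how many chunks to fetch
    rerank: bool              # whether to run a second-stage reranker

# Hypothetical plans; source names and numbers are illustrative only.
PLANS = {
    "factual": RetrievalPlan(sources=("docs",), top_k=3, rerank=False),
    "troubleshooting": RetrievalPlan(sources=("tickets", "logs", "docs"), top_k=8, rerank=True),
    "strategic": RetrievalPlan(sources=("reports", "docs"), top_k=15, rerank=True),
}

TROUBLE_CUES = ("error", "fails", "failing", "not working", "crash", "timeout")
STRATEGY_CUES = ("should we", "trade-off", "roadmap", "strategy", "compare")

def classify_intent(query: str) -> str:
    """Keyword heuristic standing in for an LLM or trained intent classifier."""
    q = query.lower()
    if any(cue in q for cue in TROUBLE_CUES):
        return "troubleshooting"
    if any(cue in q for cue in STRATEGY_CUES):
        return "strategic"
    return "factual"

def plan_retrieval(query: str) -> RetrievalPlan:
    """Choose a retrieval plan before any search is run."""
    return PLANS[classify_intent(query)]

plan = plan_retrieval("The nightly export job fails with a timeout")
```

Here the troubleshooting query is routed to tickets and logs with a larger `top_k`, while a plain factual question would only search the document index.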

3. Multi-Source and Hybrid Retrieval

Another major evolution is the move beyond a single knowledge source.

Instead of relying only on vector databases, RAG systems in 2025 can retrieve from:

- Structured databases (SQL, data warehouses)

- APIs and real-time systems

- Internal documents and manuals

- Logs, tickets, and operational data

Hybrid retrieval methods combine vector search, keyword search, and symbolic reasoning to improve accuracy and relevance, especially in enterprise environments where data diversity is the norm.
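
The article does not prescribe how results from different retrievers are merged. One widely used option is reciprocal rank fusion (RRF), sketched below as an assumption rather than as the method any particular system uses. The document IDs and the two input rankings are made up; in practice they would come from a vector index and a keyword index.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by score, breaking ties by ID so the output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from two retrievers for the same query.
vector_hits = ["doc_a", "doc_b", "doc_c"]
keyword_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
# fused == ["doc_a", "doc_c", "doc_b", "doc_d"]
```

RRF only needs ranks, not raw scores, so it can merge retrievers whose scores are not comparable, such as cosine similarity and a keyword relevance score.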

4. Continuous Learning and Knowledge Refresh

Traditional RAG systems treat knowledge as static. Once indexed, documents remain unchanged until manually updated.

Smarter RAG systems introduce continuous knowledge refresh, enabling them to:

- Detect outdated or low-quality content

- Automatically re-index or reprioritize documents

- Learn from user feedback and interaction logs

This turns RAG from a passive retrieval layer into an actively improving system, better aligned with real business usage.
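
A refresh job along these lines could be sketched as follows. Everything here is hypothetical: the `DocRecord` fields, the 90-day age limit, and the smoothed vote ratio used as a ranking weight are illustrative choices, not a description of a specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class DocRecord:
    doc_id: str
    last_indexed: datetime
    source_modified: datetime
    helpful_votes: int = 0
    unhelpful_votes: int = 0

def needs_reindex(doc: DocRecord, now: datetime, max_age: timedelta) -> bool:
    # Re-index when the source changed after indexing, or the index entry is too old.
    return doc.source_modified > doc.last_indexed or now - doc.last_indexed > max_age

def feedback_weight(doc: DocRecord) -> float:
    # Laplace-smoothed helpfulness ratio; 0.5 when there is no feedback yet.
    return (doc.helpful_votes + 1) / (doc.helpful_votes + doc.unhelpful_votes + 2)

def refresh_cycle(docs: list[DocRecord], now: datetime,
                  max_age: timedelta = timedelta(days=90)):
    """Return the IDs to re-index and a per-document ranking weight."""
    to_reindex = [d.doc_id for d in docs if needs_reindex(d, now, max_age)]
    weights = {d.doc_id: feedback_weight(d) for d in docs}
    return to_reindex, weights

# Made-up records for illustration.
now = datetime(2025, 6, 1)
docs = [
    DocRecord("policy", datetime(2025, 5, 1), datetime(2025, 4, 1), helpful_votes=8),
    DocRecord("runbook", datetime(2025, 5, 1), datetime(2025, 5, 20), 1, 5),
    DocRecord("faq", datetime(2024, 12, 1), datetime(2024, 11, 1)),
]
to_reindex, weights = refresh_cycle(docs, now)
```

In this run the runbook is queued because its source changed after indexing, the FAQ because its index entry is older than the limit, and the weights could be multiplied into retrieval scores to demote content users found unhelpful.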

5. Agent-Based RAG Architectures

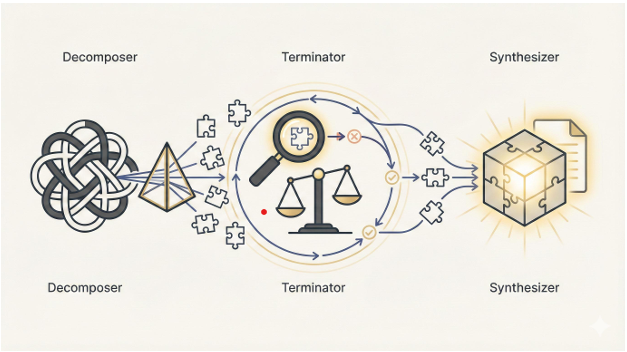

One of the most important trends in 2025 is the integration of AI agents with RAG.

Instead of a single retrieval step, agent-based RAG systems can:

- Break down complex queries into sub-tasks

- Perform multiple retrieval cycles

- Validate and compare information from different sources

- Decide when additional retrieval is necessary

This approach can improve results for multi-step reasoning, decision support, and enterprise workflows, where a single retrieval pass often misses part of what the answer needs.
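
The control loop behind these four behaviors can be sketched without committing to any agent framework. In the sketch below, `decompose`, `retrieve`, `is_sufficient`, and `rewrite` are hypothetical callables that a real system would back with an LLM and actual retrievers; the toy versions and the tiny corpus exist only so the loop can run.

```python
from typing import Callable

def agentic_retrieve(
    question: str,
    decompose: Callable[[str], list[str]],
    retrieve: Callable[[str], list[str]],
    is_sufficient: Callable[[str, list[str]], bool],
    rewrite: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> dict[str, list[str]]:
    """Collect evidence per sub-task, retrying retrieval until it is judged sufficient."""
    evidence: dict[str, list[str]] = {}
    for sub_task in decompose(question):          # break the query into sub-tasks
        query, found = sub_task, []
        for _ in range(max_rounds):               # multiple retrieval cycles, bounded
            for passage in retrieve(query):
                if passage not in found:
                    found.append(passage)
            if is_sufficient(sub_task, found):    # decide whether more retrieval is needed
                break
            query = rewrite(sub_task, found)      # try a different angle next round
        evidence[sub_task] = found
    return evidence

# Toy stand-ins with made-up data.
CORPUS = {
    "vpn setup": ["Manual: install the VPN client."],
    "vpn setup tickets": ["Ticket: the VPN client needs admin rights."],
    "badge access": ["Manual: request badges via the facilities form.",
                     "FAQ: badges are issued by facilities."],
}
calls: list[str] = []

def decompose(question: str) -> list[str]:
    return [part.strip() for part in question.split(" and ")]

def retrieve(query: str) -> list[str]:
    calls.append(query)
    return CORPUS.get(query, [])

def is_sufficient(sub_task: str, found: list[str]) -> bool:
    return len(found) >= 2  # require two passages so they can be cross-checked

def rewrite(sub_task: str, found: list[str]) -> str:
    return f"{sub_task} tickets"

evidence = agentic_retrieve("vpn setup and badge access",
                            decompose, retrieve, is_sufficient, rewrite)
```

The `max_rounds` cap matters in practice: without it, a sub-task with no good evidence would keep the agent retrieving indefinitely.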

6. Enterprise Impact: Why This Matters

For enterprises, smarter RAG means more than better chatbot answers.

Key benefits include:

- Higher accuracy and reduced hallucinations

- Faster access to internal knowledge

- Better decision support for employees

- Scalable AI systems aligned with business processes

In sectors such as manufacturing, finance, healthcare, and IT services, intelligent RAG systems are becoming a foundation for next-generation AI platforms.

7. Looking Ahead

In 2025, RAG is no longer just an architectural pattern; it is a core capability for enterprise AI.

The shift from static retrieval to intelligent, adaptive knowledge systems marks a critical step toward AI that truly understands context, evolves with data, and supports real-world decision-making.

Organizations that invest early in smarter RAG architectures will be better positioned to build reliable, scalable, and business-ready AI solutions.